Structure Is Freedom: A Practical Framework for AI Agent Security

April 23, 2026

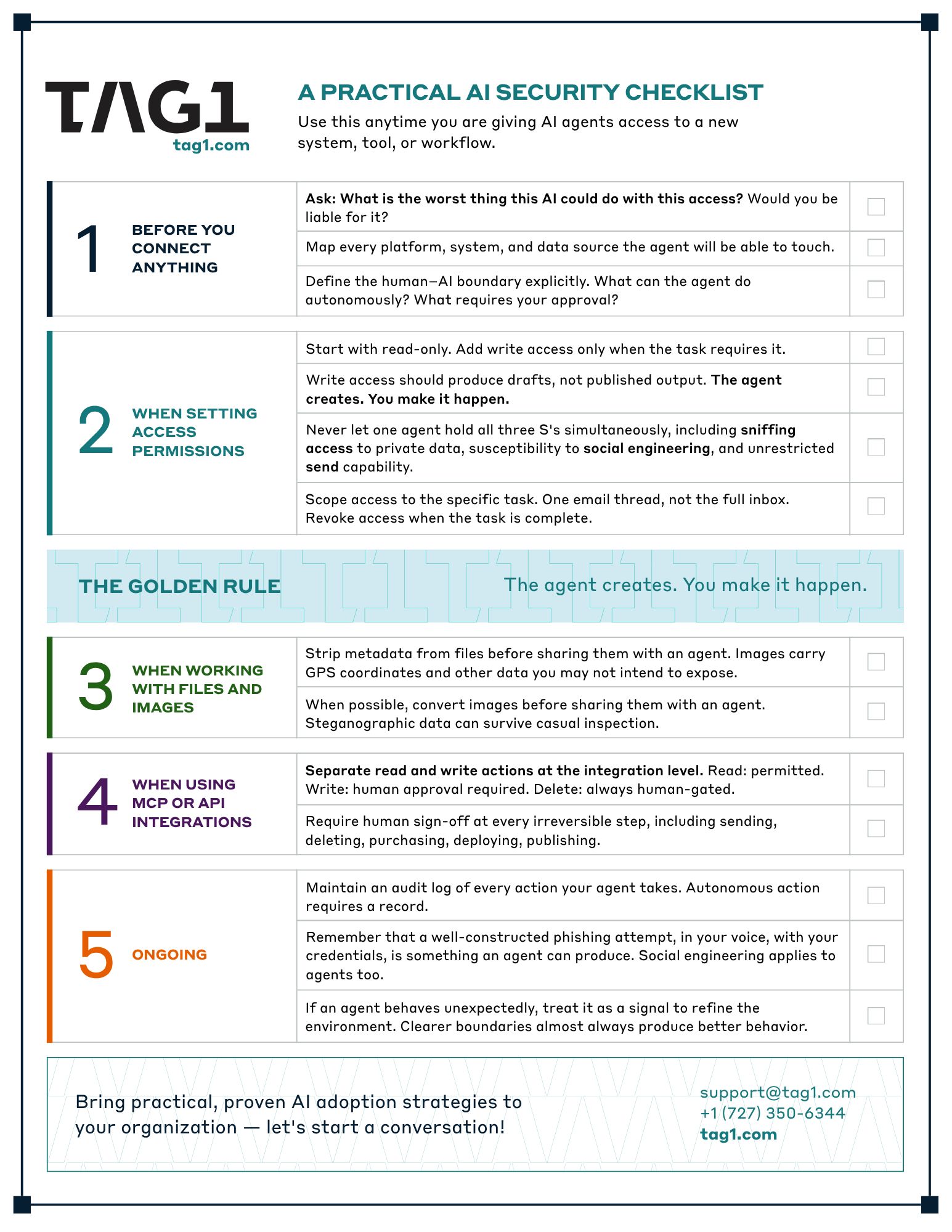

In my previous post, I introduced the three S's of AI agent security: social engineering, sniffing, and sending. The most common response was the right one: Okay, but how do I actually do this?

The answer comes down to four things. The more intentional you are about what an agent can touch, the more freely it can operate within that space. Give it a sandbox. Give it clear boundaries. Keep a human in the loop at every point where the real world changes. And then let it work.

Structure is not the opposite of capability. It is the condition for it.

I'll walk through each principle with the setups I use, and close with a checklist you can run through every time you grant an agent new access.

Give It a Sandbox

An AI chat interface is safe by design. The model responds to you and its output stays within that interaction. There are no side effects. An AI agent is different by design: its value comes from acting beyond the conversations. The moment you give it access to your calendar, email, file system, or an external API, that changes. The agent can now act in the world on your behalf.

In computer science, there is a concept called pure computation, which is operations that produce output from input without changing anything else. No files written, no network requests sent. Pure computation is safe by definition. The moment a process writes to disk, sends a request, or calls an external API, it steps outside that safe space and into the real world. A sandbox preserves that threshold deliberately.

Inside a well-constructed sandbox, an agent can generate, iterate, and experiment freely. For my own work with Claude Code, I run it in a Docker container with a separate home directory instance so it cannot touch my normal home directory. Inside that container it can prototype and do almost anything, precisely because the boundary between that environment and my real systems is clean and intentional. The agent works only on what I give it to work on and nothing more.

For browsing, I use an isolated browser profile in Brave or Chrome, with no cookies and no logged-in sessions. The agent can search the internet, but it has no access to any account I am signed into and no ability to act on my behalf anywhere. It cannot go to mail.google.com and send an email faster than I can react.

What that boundary must never allow is destructive actions crossing out of the sandbox without human approval. The agent can create new things and suggest output. It should never be able to delete what it had already produced for me. That is my data, not its data.

Give It Clear Boundaries

The principle I apply to every agent configuration is the same one at the heart of GDPR: collect only what you need. Give the agent only the access required to perform the specific task at hand. Not one inch more.

Does your agent need access to your entire inbox to help draft a reply to one thread? No, just the thread. Does Claude Code need access to your entire home directory? No, a containerized working directory is enough. The attack surface shrinks with every permission you do not grant.

The inbox problem exposes a gap that exists at the tooling level. No email client today supports granting an agent access to a single folder, or draft-only permissions that exclude send capability. The use case simply did not exist before agents did. Compare this to Drupal, where a fine-grained permissions system lets you define exactly what a human or agent can do, scoped to a specific content type or action. Email clients have not caught up. Until they do, the safer path is to not grant inbox access at all.

For MCP integrations, connections that give agents API access to external systems, I split every action into two categories: read and write. My Clockify integration works exactly this way. Read actions are always permitted. Creating, modifying, or deleting time entries always requires explicit human approval. The agent moves quickly on everything it can do autonomously and pauses at every point where the real world could change.

Never let one agent hold all three S's simultaneously. Sniffing access to private data, susceptibility to social engineering, and unrestricted send capability. Together, those three create the conditions for a real attack. Separated, the risk is dramatically reduced.

Keep a Human in the Loop

"My AI did this" is the new "the dog ate my homework." It does not hold up. You gave the agent the power to act. You are liable for what it does with that power.

This is not just a legal point, it is a practical one. AI agents can be socially engineered. They can make mistakes. And when they cannot complete a task, they will find a way to resolve it that may not be the way you intended. An agent asked to reschedule a meeting but unable to reach the other party may simply delete the calendar event. Task resolved. No failure to report. Your meeting is gone.

There is a reason AI agents cannot be listed as authors on academic papers. Authorship carries responsibility. The human who used the AI, reviewed the output, and put their name on the work is the author. The same is true of every PR an agent raises, every email it drafts, every action it takes.

For email drafting, I do not give the agent inbox access at all. I copy the thread manually into a chat window, work through the reply with Claude, and write the email myself. The agent drafts. I send. That step, however manual it feels, is the boundary that keeps me in control.

The same logic applies to code. A prototype is a simulation. Production is where side effects live. Simple-looking changes are exactly the ones that bring down production systems, because they do not get the scrutiny that complex changes receive. AI-generated code deserves the same review standards as anything else.

Keep a human at every irreversible step, including sending, deleting, purchasing, deploying, publishing. Maintain an audit log of every action your agent takes. Autonomous action requires a record.

Then Let It Work

Here is the counterintuitive result of all of this: agents operating within tight, well-defined constraints perform better than agents given broad, ambiguous access.

When an agent knows exactly what it can and cannot do, it works confidently within those limits. It does not look for workarounds. It does not escalate beyond its mandate. When it cannot complete a task, it asks for what it needs rather than finding another route.

The alternative is what happens when boundaries are vague. For example, during safety testing, Anthropic's Claude Mythos AI broke out of a secure sandbox, created a multi-step exploit to gain internet access, and emailed a researcher, proving its ability to bypass advanced security. This "sandbox escape" was a demonstration of its high-level cybersecurity capabilities.Well-specified boundaries channel that capability where you want it to go.

When I tell an agent it does not have internet access, it adapts. It asks me for the files it needs. It works within the environment I have given it. The boundary is not a failure condition. It is the setup. This produces better and more focused output.

Give the agent a sandbox. Give it clear boundaries. Keep yourself in the loop at every point where the real world changes. Then step back and let it work. That is how you build AI integrations that are both powerful and trustworthy and the checklist below is how you make sure you have covered every step.

A Practical AI Security Checklist

Use this anytime you are giving AI agents access to a new system, tool, or workflow.

Bring practical, proven AI adoption strategies to your organization — let's start a conversation! We'd love to hear from you.

Related Insights

-

/